Rise of MLOps monitoring in 2022

Machine learning, a branch of artificial intelligence, is now used in practically every industry, including medicine, eCommerce, and science. Finding up-to-date tools, methodologies, and systems for building machine learning models is not difficult. The major challenge is keeping those models up to date at scale. This article explains the rise of MLOps monitoring in 2022.

The current paradigm in machine learning development depends on the cooperation of four main areas of study: Big Data, Data Science, Computer Engineering, and Machine Learning.

Issues with Previous Systems

Traditional software has been deterministic and predictable. Machine learning systems, by contrast, are probabilistic. If well maintained, these models can keep improving as data grows. The risk is that they may start to degrade as soon as they are deployed. The main cause is unanticipated or unobserved changes in the dataset, its format, or other features, any of which can lead to model failure. Data in the real world changes all the time, but some changes are large and cause a considerable, sometimes unmeasured, drop in predictive performance.

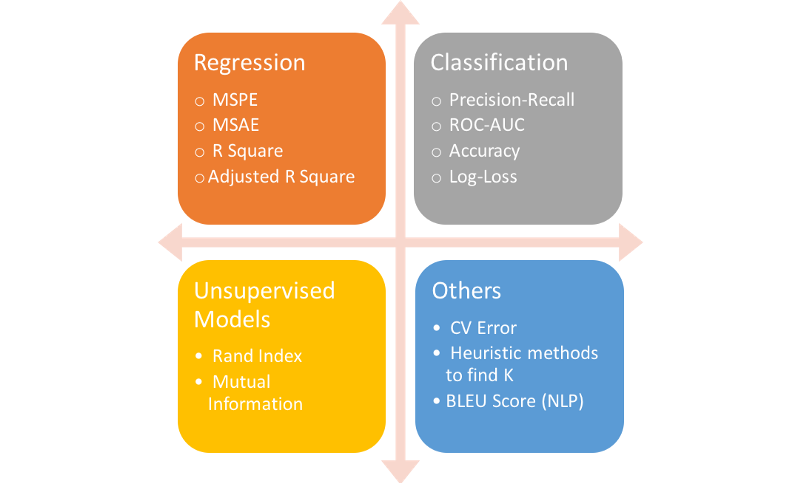

This also means that ML monitoring has to tackle a few distinct and difficult tasks. To begin with, models frequently fail silently. In other words, today’s machine learning systems lack an equivalent of program crashes and error messages. Furthermore, because machine learning is probabilistic, metrics are harder to define up front. As a result, you cannot always fix a problem the way you would fix an overloaded CPU, by adding more computing power. Based on these concerns, we divide ML operations monitoring into three types: data, model, and code.

Use of MLOps

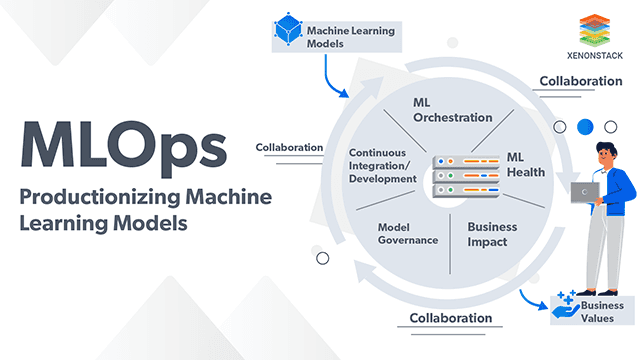

MLOps aims to derive business insights from data by quickly scaling up the delivery of machine learning (ML) models. To make MLOps work, many firms have created a new role called ML engineer. Even though a data scientist may uncover a solution with business value, getting it into production requires a different set of skills. The ML engineer builds ML pipelines that can reproduce the results of the data scientist’s models consistently, cheaply, accurately, and at scale in production.

Rise of MLOps

The need for MLOps, and what drove its development in the present era of artificial intelligence, is evident from the preceding discussion. Having covered the ‘why’ and the ‘when,’ we now move on to the ‘how.’ First, let’s examine the factors that contributed to the emergence of MLOps.

Multiple Workflows

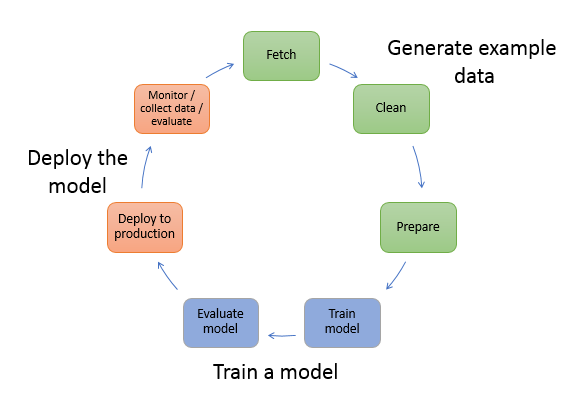

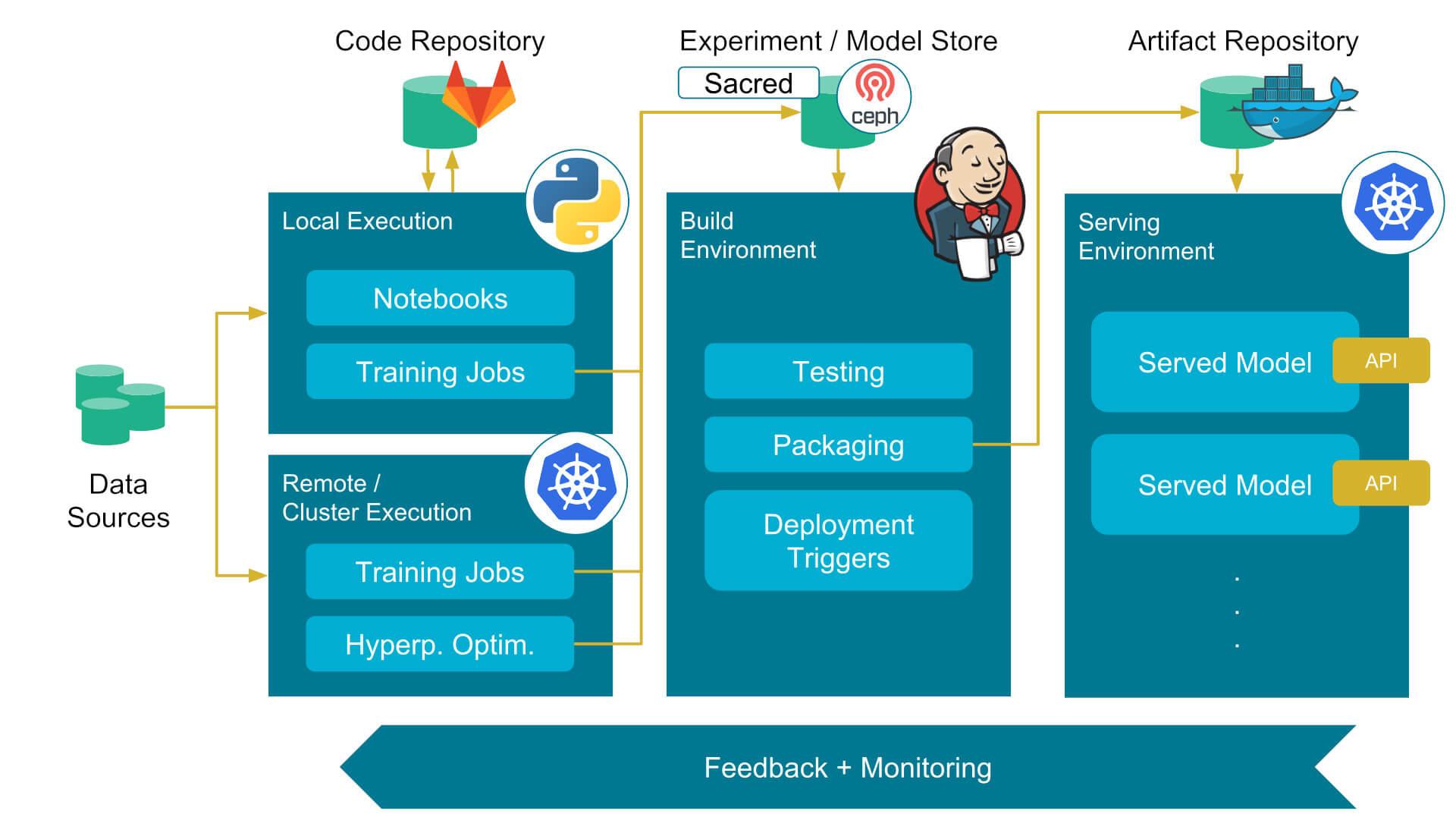

Machine learning models are not created with a single program file. Rather, building one entails the collaboration of various workflows, each with its own set of responsibilities. The major stages in the development of an ML system include pre-processing, feature extraction, model construction, and model deployment. MLOps is critical for coordinating these workflows so that the model can be updated periodically.
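To make the idea of coordinated stages concrete, here is a minimal sketch in plain Python. The stage functions, the toy threshold "model," and the record layout (`x`, `label`) are all assumptions made for illustration; a real system would put each stage behind its own tooling and schedule.

```python
from statistics import mean

def preprocess(rows):
    """Drop records with a missing feature value or label."""
    return [r for r in rows if r["x"] is not None and r["label"] is not None]

def extract_features(rows):
    """Turn raw records into (feature, label) pairs."""
    return [(float(r["x"]), int(r["label"])) for r in rows]

def train(pairs):
    """Toy 'model' (illustrative only): threshold halfway between class means."""
    pos = [x for x, y in pairs if y == 1]
    neg = [x for x, y in pairs if y == 0]
    return {"threshold": (mean(pos) + mean(neg)) / 2}

def deploy(model):
    """Stand-in for deployment: return a callable prediction function."""
    return lambda x: int(x >= model["threshold"])

def run_pipeline(rows):
    """Run every stage in order; rerun this whenever new data arrives."""
    return deploy(train(extract_features(preprocess(rows))))

# Hypothetical raw data; one record is incomplete and gets dropped.
raw = [
    {"x": 1.0, "label": 0},
    {"x": 2.0, "label": 0},
    {"x": None, "label": 1},
    {"x": 8.0, "label": 1},
    {"x": 9.0, "label": 1},
]
predict = run_pipeline(raw)
print(predict(3.0), predict(7.0))
```

Because each stage is a separate function, any one of them can be swapped out, tested, or monitored on its own, which is the coordination problem MLOps tries to solve at larger scale.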

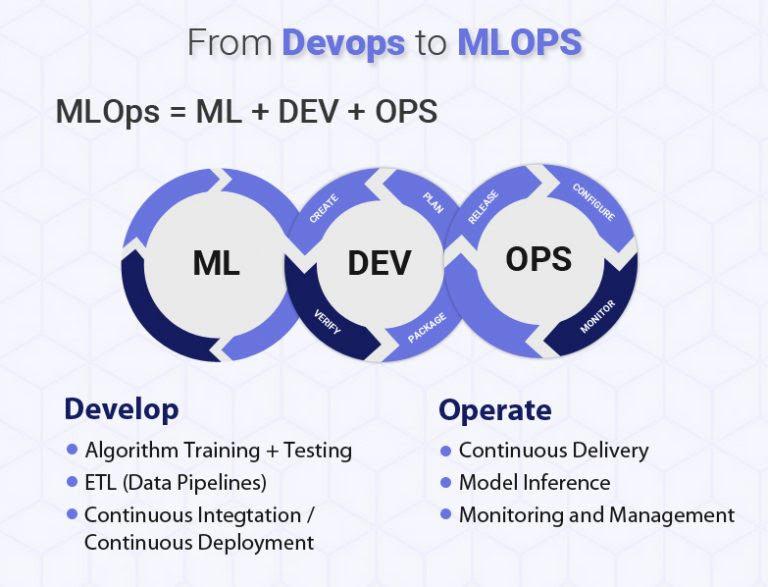

Management of MLOps

The ML lifecycle is made up of several parts, each of which should be treated as a separate software component. Each has its own management and maintenance concerns, which is the kind of problem DevOps usually addresses, but controlling them with typical DevOps approaches is difficult. MLOps is a newer method that combines people, processes, and tools so that machine learning models can be developed and deployed quickly and safely.

MLOps versus ML Maintenance

The most important part of the post-deployment phase is monitoring ML health once models are in production. It is critical to ensure that ML models run correctly and stay under control. MLOps provides up-to-date ML health practices by automating the detection of various errors and shifts. With modern tooling, these shifts can be identified and dealt with well before they begin to damage performance and machine learning operations.
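As one illustration of automated shift detection, the sketch below computes the Population Stability Index (PSI) between a reference sample and a current sample of a single numeric feature. PSI is only one common drift statistic; the article does not name a specific method, and the bin count and the 0.2 alert level used here are assumptions to tune for each feature.

```python
import math

def psi(reference, current, bins=5):
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # Fall back to 1.0 if the reference is constant.

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the reference range go into the first or last bin.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    ref, cur = shares(reference), shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))

# Hypothetical data: training-time values versus shifted production values.
reference = [float(v) for v in range(100)]
shifted = [v + 50 for v in reference]

ALERT_LEVEL = 0.2  # Assumed threshold, not a universal constant.
if psi(reference, shifted) > ALERT_LEVEL:
    print("drift alert")
```

A check like this needs no labels, so it can run on every batch of incoming data and raise an alert before accuracy metrics are even available.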

ML Applications on a Large Scale

As previously stated in this article, building models is not the main concern; the true challenge is managing models at scale. Managing hundreds of models at once is a time-consuming and difficult task that puts the models’ performance to the test. MLOps is a reasonable approach for handling hundreds of ML production pipelines. Even so, a few issues may arise for MLOps monitoring in 2022.

Detecting Performance Issues without Ground-Truth Labels

When training models on a dataset, practitioners use ground-truth labels to assess accuracy and other metrics. In production, those labels are often unavailable, and without them the metrics that measure the model’s actual performance cannot be computed.

Identifying data quality issues and risks

Sophisticated models are fed by complex, multi-stage pipelines and automated workflows in which real-time data goes through many transformations. With so many moving parts, it is not unusual for data errors and inconsistencies to degrade model performance without getting any attention.
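A lightweight guard against this kind of unnoticed data problem is to validate each incoming record before it reaches the model. The sketch below is one possible shape for such a check; the field names, types, and ranges in `EXPECTED` are hypothetical and would come from your own schema.

```python
import math

# Hypothetical expectations for each input field: (type, min, max).
EXPECTED = {
    "age": (int, 0, 120),
    "income": (float, 0.0, 1e7),
}

def check_record(record):
    """Return a list of human-readable data quality problems for one record."""
    problems = []
    for field, (kind, low, high) in EXPECTED.items():
        value = record.get(field)
        if value is None:
            problems.append(f"{field}: missing")
        elif not isinstance(value, kind):
            problems.append(
                f"{field}: expected {kind.__name__}, got {type(value).__name__}"
            )
        elif isinstance(value, float) and math.isnan(value):
            problems.append(f"{field}: NaN")
        elif not low <= value <= high:
            problems.append(f"{field}: {value} outside [{low}, {high}]")
    return problems

print(check_record({"age": 250, "income": None}))
```

Counting how many records fail per hour or per batch turns these checks into a metric that can be charted and alerted on like any other.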

Machine learning models are black boxes

The black-box nature of machine learning models makes them highly challenging for ML practitioners to interpret and troubleshoot, especially in a live environment.

Computing genuine metrics

Tracking a model’s decisions or outcomes can provide valuable direction. To do so, the labels or outcomes must first be ingested, then joined with the corresponding predictions, and then compared against a previous time period. In most monitoring tools, these metrics are not computed in real time, which means the information often arrives too late to be useful.
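The ingest, join, and compare steps can be sketched as follows. This is an illustration under assumptions: predictions are logged with a request ID and a day, labels arrive later keyed by the same ID, and the `tolerance` value is arbitrary.

```python
from collections import defaultdict

def accuracy_by_day(predictions, labels):
    """Join predictions with late-arriving labels and compute daily accuracy.

    predictions: list of (request_id, day, predicted_class)
    labels: dict mapping request_id -> true_class, for labels received so far
    """
    hits, totals = defaultdict(int), defaultdict(int)
    for request_id, day, predicted in predictions:
        if request_id not in labels:
            continue  # Label has not arrived yet; skip until it does.
        totals[day] += 1
        hits[day] += int(predicted == labels[request_id])
    return {day: hits[day] / totals[day] for day in sorted(totals)}

def accuracy_drops(daily, tolerance=0.1):
    """Days whose accuracy fell by more than `tolerance` versus the previous day."""
    days = list(daily)
    return [d for prev, d in zip(days, days[1:]) if daily[prev] - daily[d] > tolerance]

# Hypothetical logs: request "e" has no label yet and is left out.
predictions = [
    ("a", "2022-01-01", 1),
    ("b", "2022-01-01", 0),
    ("c", "2022-01-02", 1),
    ("d", "2022-01-02", 1),
    ("e", "2022-01-03", 0),
]
labels = {"a": 1, "b": 0, "c": 0, "d": 1}

daily = accuracy_by_day(predictions, labels)
print(daily, accuracy_drops(daily))
```

Rerunning the join as labels trickle in keeps the metric as fresh as the label feed allows, rather than waiting for a complete batch.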

Bias

Because machine learning models learn associations from their input data, they are likely to propagate or amplify existing data bias, or even introduce new bias. Measuring actual bias once a model is released, and examining bias concerns in detail, is time-consuming and error-prone. When deploying a new version, teams can either run past data through the new model or run the new model alongside the previous one. Both methods can be error-prone and time-consuming.
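One simple way to put a number on bias in production is to compare how often each group receives a positive prediction. The sketch below computes that gap, often called the demographic parity difference. It is only one of several fairness measures, and the group labels and predictions are made up for illustration.

```python
from collections import defaultdict

def positive_rates(predictions, groups):
    """Share of positive (1) predictions for each group."""
    positives, totals = defaultdict(int), defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}

def parity_gap(predictions, groups):
    """Demographic parity difference: largest gap in positive rates between groups."""
    rates = positive_rates(predictions, groups).values()
    return max(rates) - min(rates)

# Hypothetical binary predictions and the group each request belongs to.
preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

print(positive_rates(preds, groups), parity_gap(preds, groups))
```

Computing the same gap for the old and the new model on the same traffic gives a quick side-by-side comparison when a new version runs alongside the previous one.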

These difficulties highlight the vital need for a tool that monitors ML deployments, not only to detect operational problems but also to help reveal their root causes. MLOps monitoring tools are essential to help businesses keep adopting AI, because they let teams streamline ML model deployments with real-time insight and quick remediation.

Conclusion

Finding up-to-date machine learning tools, approaches, and systems to build models that solve a problem is not difficult. Keeping those models up to date at scale is the big challenge. MLOps monitoring tools are important for organizations that want to keep adopting AI, because they ease ML model deployments by providing real-time insight and speedy remediation. With up-to-date tooling, shifts in data and model behavior can be detected and addressed well before they begin to harm performance and machine learning operations.